On building an AI-native company

I run CodeWall, a pre-seed AI security company. We recently published research on breaking into the AI platforms of McKinsey, Bain, and BCG — but this post isn't about the offensive side. It's about how we run an AI-native company day-to-day.

About six months ago I stopped thinking of AI as a tool I use and started thinking of it as infrastructure I build on.

This is what that looks like in practice.

The Thesis

I believe AI lets one person do the work of several. Not in the breathless "AI will replace everyone" way, but in a specific, structural way that most people are underestimating.

The real bottleneck now for a founder is context switching. On any given day I move between investor conversations, coding, infrastructure, customer onboarding, product decisions, hiring, compliance, legal, partnerships, speaking events — the list goes on. Each requires loading a different mental model, a different set of relationships, and a different set of outstanding commitments. The transitions are where the waste lives. You spend twenty minutes getting back up to speed on a thread you last touched three days ago. You forget to connect something you learned in one context to a decision you're making in another. You do shallow work across ten things instead of deep work on three.

The leverage doesn't come from any single task being faster. It comes from the overhead between tasks approaching zero. A founder who can move between six workstreams without losing context in the transitions operates at a fundamentally different level than one who spends half their day reloading mental state.

That's the theory. Here's how it has played out.

Two weeks ago I was about to hire an EA and sign three SaaS contracts. I haven't done either.

1. The Investor Dataroom

A few weeks ago I needed somewhere to send investors. The deck, the financial model, customer references, client contracts, the cap table — the standard "please fund me" pre-seed package. The usual options are an absurdly expensive dataroom SaaS platform at a few hundred dollars a month for features I'd use a fraction of, or a Google Drive link that every other founder also sends.

Neither felt right. Pre-seed isn't the moment to pay for DocSend-grade tooling for something this simple. But a Drive link is also one of the first artefacts an investor sees, and a folder of PDFs says nothing about how we work. I wanted it to feel built, not bought.

So I put Claude to work. I gave it the spec — NDA flow, per-investor email verification via Magic Links, a personalised welcome badge for each investor (yes, really), gated document viewing, audit trail of who opened what, easy new-user onboarding and file uploading — pointed it at our stack, and let it build.
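For a sense of what the verification and audit-trail parts of that spec involve, here is a minimal sketch. It is not the code Claude produced. The names (`SECRET_KEY`, `make_token`, `open_document`), the 15-minute expiry, and the in-memory log are all assumptions for illustration.

```python
import hashlib
import hmac
import time

SECRET_KEY = b"replace-with-a-real-secret"  # hypothetical; load from a secret store in practice
LINK_TTL_SECONDS = 15 * 60                  # assumed validity window for a magic link

audit_log = []  # in-memory stand-in for a real audit table


def _sign(email, expiry):
    """HMAC over the email and expiry, so neither can be altered."""
    message = f"{email}|{expiry}".encode()
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def make_token(email, now=None):
    """Return 'expiry.signature', bound to one investor's email."""
    issued = now if now is not None else time.time()
    expiry = int(issued + LINK_TTL_SECONDS)
    return f"{expiry}.{_sign(email, expiry)}"


def verify_token(email, token, now=None):
    """True only if the signature matches and the link has not expired."""
    try:
        expiry_str, sig = token.split(".", 1)
        expiry = int(expiry_str)
    except ValueError:
        return False
    current = now if now is not None else time.time()
    valid_sig = hmac.compare_digest(sig.encode(), _sign(email, expiry).encode())
    return valid_sig and current <= expiry


def open_document(email, token, doc_name, nda_signed, now=None):
    """Gate a document behind a valid link and a signed NDA; log every attempt."""
    allowed = bool(nda_signed) and verify_token(email, token, now)
    audit_log.append({
        "email": email,
        "doc": doc_name,
        "allowed": allowed,
        "at": now if now is not None else time.time(),
    })
    return allowed
```

Signing the email and expiry together means a link forwarded to someone else fails verification, and logging denied attempts as well as successful ones is what makes the audit trail useful.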

Even this demo was generated by an AI agent in two minutes — redactions and all.

The whole thing took a Claude agent under an hour, end to end, and I spent roughly another 30 minutes reviewing and tweaking. I'm fairly sure I would have spent longer comparing options, signing up, and configuring a bought tool. Here, I told Claude what to build and gave it access to all of our documents, and it even deployed everything to our production server, including creating new DNS records.

Is this overkill? Probably. A Drive link would have done the job, and at pre-seed nobody will fault you for that. But when something costs an hour rather than a week, overkill stops being the right frame — the threshold for "worth doing yourself" drops by an order of magnitude.

And look, this wouldn't work at a large enterprise; being able to do it is the benefit of being a nimble startup. There you need procurement-approved vendors, SOC 2 paperwork, a security review, and an admin maintaining the thing who isn't the founder. At that scale you buy DocSend, and that's the right call.

When the cost of building approaches zero, the build-vs-buy calculus shifts. Things that used to be obviously buy, because they weren't worth a developer-week, become obviously build. And what you build is yours: shaped to your brand, integrated with your stack, free to evolve when needs change.

2. The Company Brain

The most ambitious piece is what Tom Blomfield is calling a company brain in YC's latest RFS — the missing layer between scattered company knowledge and AI agents that can actually act on it. The same RFS frames the integration side of this as an AI Operating System for Companies: every channel queryable, the company itself becoming a closed-loop system. An AI Chief of Staff, if you will. And that's exactly what we've built at CodeWall.

It monitors every email arriving at the company, every Teams call I take, every Calendar invite, every LinkedIn message, every client Slack workspace — and processes all of them against a living knowledge base of everything else that's happening.

The knowledge layer is built on the approach Andrej Karpathy described in his LLM-maintained wiki. Every person gets a page, every company, every deal, every topic, every meeting. As I write this, that's 400+ people, 260+ companies, and 80+ topics — all cross-linked. The agent reads and writes these pages continuously, and knowledge compounds over time rather than decaying in a thread you'll never reopen.
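To make the page-per-entity idea concrete, here is a rough sketch of a cross-linked page store. It is an illustration under assumptions, not our actual schema: the `Page` and `Wiki` classes and their method names are hypothetical, and a real version would persist pages rather than hold them in memory.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    kind: str                                   # "person", "company", "deal", "topic" or "meeting"
    title: str
    notes: list = field(default_factory=list)   # accumulated observations, oldest first
    links: set = field(default_factory=set)     # titles of cross-linked pages


class Wiki:
    def __init__(self):
        self.pages = {}  # title -> Page

    def upsert(self, kind, title):
        """Return the existing page, or create it on first mention."""
        if title not in self.pages:
            self.pages[title] = Page(kind, title)
        return self.pages[title]

    def link(self, a, b):
        """Cross-link two existing pages in both directions."""
        self.pages[a].links.add(b)
        self.pages[b].links.add(a)

    def record(self, title, note):
        """Append a note so knowledge accumulates on the page."""
        self.pages[title].notes.append(note)
```

Because `upsert` never overwrites an existing page, each new email or transcript adds to what is already known about a person or company instead of starting from scratch.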

When a new email arrives, the system already knows who the sender is, what company they're at, who introduced them, what the last conversation covered, and what's outstanding. If they're new, an agent spins up to do the legwork — LinkedIn profile, recent posts, X/Twitter, the company website, mutual connections, anything publicly relevant — and a person and company page exist by the time the email surfaces in my inbox, with the relevant context already pinned to the top. LinkedIn messages flow through the same enrichment — sender profiled, prior context linked, a draft reply waiting.

When a calendar invite lands, attendees are pre-researched, the agenda anticipated, and a prep brief is ready before I sit down. When a Teams call ends, the transcript flows through the same pipeline — attendees identified, action items extracted, wiki pages updated, follow-ups created.

When a message lands in a client Slack — a question in #support, a thumbs-up on a feature request, a casual mention of a competitor — it's tagged against the right account, linked to the relevant deal or topic, and surfaced if it needs my attention rather than getting lost in the noise.

Every interaction feeds the same picture, and the picture gets richer with every input. This is surfaced through a widget that sits right on my desktop, showing me what's important and what needs to get done — with the company brain in full context.

What This Looks Like In Practice

I like to show, not tell. So here are three examples:

- An email came in from a partner at a Big 4 firm about a speaking engagement, with several people copied in. The system processed the email and, as part of its research on each person, identified that one was part of the Cloud Security Alliance with a background in standards work. It cross-referenced that against an industry standards initiative I'd been building separately and instantly flagged the connection. Instead of "reply to this email", the follow-up action was: this person's standards background is directly relevant to your certification council, here's how to position the conversation, and here's the strategic value. That direct follow-up got him on the Founding Council. I would have missed that.

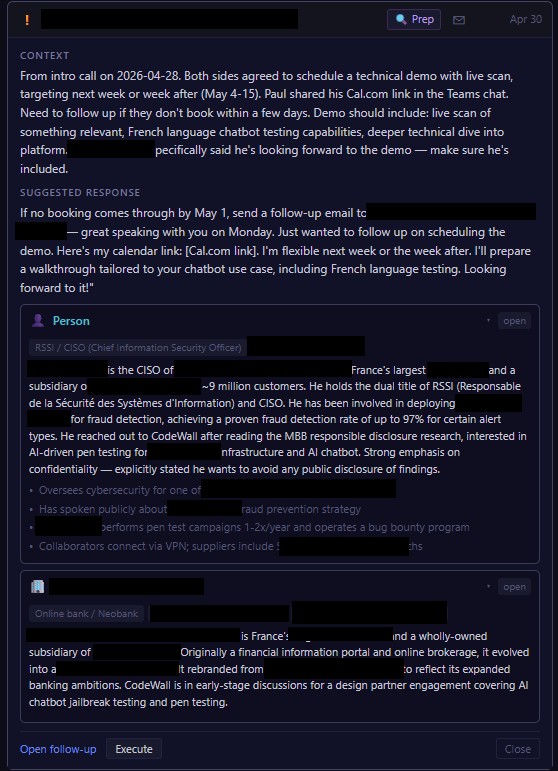

- A CISO at a digital bank booked a cold call after reading our research pieces. Minutes after the call ended, the system had processed the Teams transcript, built wiki pages for him and his bank from public material, and pulled the demo scope he'd asked for (live scan, French-language chatbot testing). Todos were created for what I'd committed to. A follow-up email was drafted referencing specifics from the call, pre-staged to send if no booking lands by May 1. If he books, the note updates. If May 1 passes silently, the draft surfaces to the top of my list, already waiting.

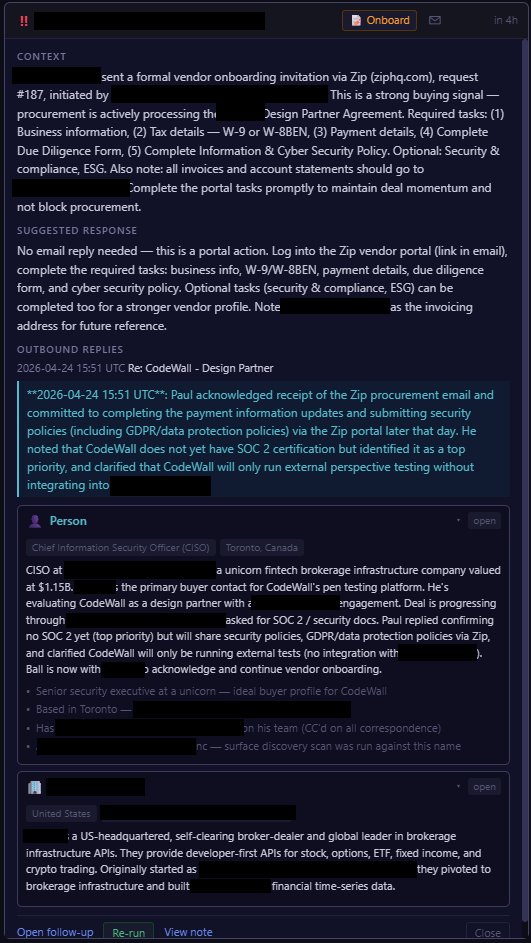

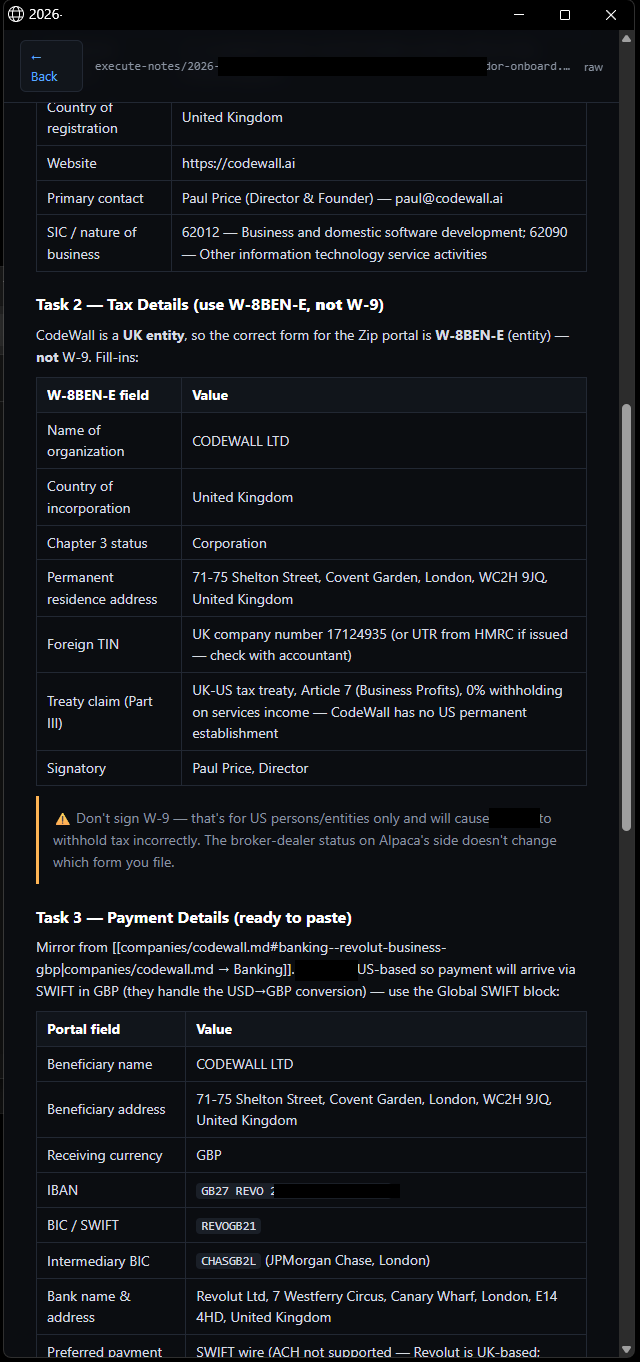

- A client kicked off procurement onboarding through their portal. Five tasks: business info, tax, payment, due diligence, cyber security policy. The system flagged the email as a buying signal and produced a single execute note with everything I'd need to paste — UK entity details, company number, banking block, and the right tax form for the situation (W-8BEN-E, not W-9, because we're a UK entity selling into the US). It flagged the trap too: don't sign W-9, that's for US persons only. What would have taken me over an hour took about five minutes.
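The pre-staged follow-up in the second example comes down to a small piece of state: a draft, a deadline, and whether a booking has landed. A minimal sketch of that decision, with a hypothetical `StagedFollowUp` class and status labels that are assumptions rather than the system's real names:

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class StagedFollowUp:
    contact: str
    draft: str
    deadline: date
    booked: bool = False

    def status(self, today):
        """Decide what to do with the staged draft on a given day."""
        if self.booked:
            return "update-note"    # booking landed, so no chaser is needed
        if today > self.deadline:
            return "surface-draft"  # deadline passed silently: move the draft to the top
        return "wait"               # still inside the window, keep the draft staged
```

Checking `booked` first means a late booking always wins over the chaser, even after the deadline.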

(And countless other examples. Happy to share more if you drop me a line.)

The question I started with was "who should I hire?" The better question was "what does this job actually consist of and can an agent do it?"

Fewer Engineers != Less Product

A VC reviewing our pre-seed plan flagged the team composition as unusual: more GTM hires than engineers. At this stage, most companies are still hardening a v0.1 into a real product with founder-led sales — the GTM build-out comes later, at late seed or Series A. It's a fair pushback, and a sensible default for most teams.

The reason the default doesn't apply here is the same argument I've been making the whole way through. AI changes what each engineering headcount has to do. The dataroom you saw above, built in an afternoon. The chief-of-staff system that compounds context across every conversation, built in half a day. A new CodeWall platform feature a client asked for on a call, shipped to production in under an hour. Each of these would have been an engineering project in a previous era; now they're features you ship between sales calls. The work that used to be split across three engineers genuinely fits into one person's day.

The work that doesn't compress the same way is the human-facing work. Sitting with a CISO and translating their threat model into an evaluation plan. Walking a procurement team through a SOC 2 attestation. Negotiating a design partner agreement. AI helps with the prep and the artefacts, but it doesn't replace the person in the room or on a video call at 10 PM.

So the ratio inverts. Engineering gets smaller because each engineer covers more ground. GTM gets larger because customer-facing work scales with the size of the customer, not with the size of the engineering team behind it. A solutions engineer, a customer success lead, an account executive — these aren't roles AI subsumes. They're roles AI enables, by freeing up the engineering capacity that would otherwise have been the bottleneck.

Where This Ends Up

That's why the EA never got hired and the SaaS contracts never got signed. The same logic applies to every other role I thought I'd need next. One $200/month Claude Max subscription has quietly replaced what would otherwise be a CRM, a dataroom platform, a meeting-prep tool, a procurement automation platform, and the engineering hours to glue them all together.

That's what building an AI-native company looks like.